Generative AI (Adobe Firefly) Comes to Photoshop!

Confession: I have been trying to write a new article about Firefly (Adobe's set of generative AI tools) for a few weeks now. However, every time I sat down to start this piece, the wizards at Adobe released something new!

Case in point... have you heard the news? It's all over town... people talkin'...

On May 23, 2023, Adobe announced that Generative AI is now part of the current public Photoshop beta. That's right... Adobe Firefly is now integrated right inside Photoshop!

Just as the recently released AI masking was the biggest thing to happen to Adobe Lightroom in more than a decade, Generative Fill is the biggest thing to happen to Photoshop since the introduction of layers. Have a look at the short video below.

A short little timelapse of me adding elements to an existing scene

Psst... check out this article to learn more about AI in Creative Cloud.

With Generative Fill, you can use Firefly AI in the Photoshop Beta to add, replace and remove parts of your image. To add something that wasn’t there before, select the area where you want it to appear, click Generative Fill on the NEW Contextual Task Bar, and type a text prompt describing the content you want in that spot. Generative Fill then creates three variations based on your prompt and fits them into the selected area. To remove something, select it, click Generative Fill, leave the text prompt blank, and click Generate.

And while it can do some wild and crazy things, I've been focusing on more realistic, natural applications of this functionality: adjusting a crop by extending the dimensions of an image, filling in missing details, and seamlessly removing distracting objects in conjunction with other AI-based tools like the Remove Tool, which is part of both the beta and the current in-market version of Photoshop.

Not only does Generative Fill get the size right, but it also gets the lighting, color, tone, shadows and even reflections right to make your new composite look realistic. You can even create a brand new composite scene from a blank canvas! More on that little nugget at the end of this article.

Note:

Funny Story - in late February I was speaking at a local college about some of the ways AI was integrated into Creative Cloud tools, and also teasing a bit about the future. At that time, I had no idea how far along this path Adobe really was.

A week or so later, we announced the Adobe Firefly beta, the first commercially-safe generative AI tool.

The Gen-AI Horse has Left the Hallucinated Barn

Yes, I know; by this point, I've missed most of the hoopla around the generative AI release in the Photoshop Beta. But that's OK. This is not a step-by-step tutorial on how to use the new tool; there are at least a dozen video tutorials already out there that cover the basics. In this article, I'm showing you some of my experiments with Generative AI. I want to share how I would use Gen-AI, and by doing so, I hope you see how it could be yet another valuable tool for you as a photographer. I will post links to video tutorials by others at the bottom of this article.

Impact on Digital Storytelling

One area that I haven't seen much discussion on is the impact Generative AI can have on Digital Storytelling. Imagine being able to augment your prose with just the right image - realistic or fantastical, based on your own words. I'm personally very excited about the possibilities here.

A couple of years ago, I attempted to do exactly this: illustrate a short story using images from Adobe Stock. I think I was pretty successful, but Gen-AI opens up so many more possibilities, particularly for works of fiction. Don't get me wrong; nothing beats a dedicated human artist or illustrator for this work, but if you are self-publishing and your budget is very tight, Generative AI could be the solution when you're just starting out.

Even without the written word, the ability to add elements to existing photographs to tell a different story - or MORE of the story - can also be a boon.

Is Seeing Believing?

And yes, I realize the razor's edge I'm walking here, philosophically. People were already worried about what to believe before the advent of easy-to-use AI technology. What is real? What is the truth of the image? These are important questions to ask, both as creators and as consumers of content.

Adobe - and many other companies - have recognized this concern and some time ago formed the Content Authenticity Initiative (CAI), "... a community of media and tech companies, NGOs, academics, and others working to promote adoption of an open industry standard for content authenticity and provenance."

Watch the videos below to learn a little more about CAI.

Learn about the Coalition for Content Provenance and Authenticity (C2PA)

Content Credentials in Firefly and Photoshop

Verifying an Image's Authenticity

Landscape & Nature Photography Workflows

Remove/Replace

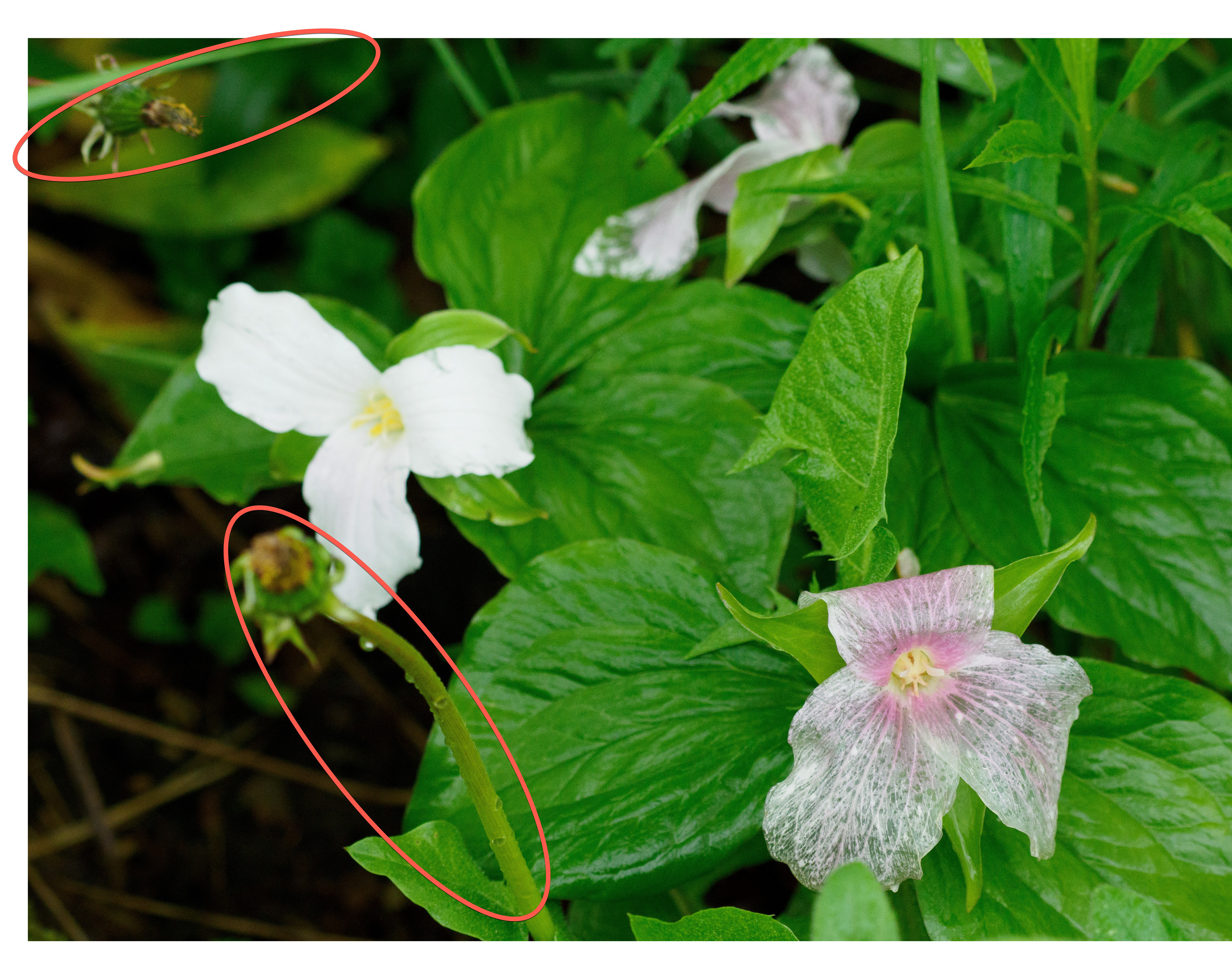

Because of the kind of work I like to do, I am often in situations where I can't - or prefer not to - disturb the area surrounding my subject, but still wish or need to remove a distracting element. Generative Fill and the new Remove Tool make it possible for me to capture my subject without damaging any sensitive surroundings.

I can also avoid an accidental dose of poison ivy in the process.

The alterations I need must be subtle and realistic, as if I were physically able to reach in and move or remove the distracting object. In these first three examples, I'm showing the original image, a version identifying the issues, and the final image.

Can YOU spot the changes in this image below?

Add/Extend

The other two important actions I can take with Generative Fill are to add elements to a scene or extend a scene's dimensions.

Timing is everything when it comes to capturing wildlife in motion. I was fortunate enough to capture this swan landing in the water, but one wing was clipped at the edge of the frame, and the bird was very close to the bottom of the frame. I expanded the canvas, selected the empty area (with a bit of overlap) and let Photoshop/Firefly work their magic. I didn't get perfect results in the first generated series, but after a couple more tries, Firefly gave me something that I thought was more than acceptable. Not only did it give me the wing tip, but it generated new water, complete with ripples AND a reflection! All without any text prompt!

Two in-camera variations of the scene.

We were up before the crack of dawn to catch these sunrise photos at Catfish Creek near Lake Superior Provincial Park. And while I did shoot landscape orientation as well as portrait (above images), I could not get back far enough to capture a wide shot like you see below. I wanted to see how Firefly would manage the scene with a fair amount of canvas extension. It did well.

Firefly/Photoshop Generative AI rendition

Another impressive scene extension, although admittedly, Firefly really struggled to create a realistic boat.

I shoot this pond often at sunset. It's a water hazard on the golf course only a five-minute walk from my cottage. Again, I wanted to experiment with realistic elements being added to the scene. In this case, the bridge, the rowboat and, off in the distance on the left, some deer. The bridge is amazing, right down to the color and quality of light being matched. And the reflection! Wow! The boat took several regenerations before I got something believable. And the deer... well, let's just say I'm glad they are small and in a darker background. A friendly reminder that all this technology is currently still in beta.

I thought to myself, "OK, dusk and twilight work pretty well, but what about a bright scene?" Personally, I love the original landscape, devoid of any animal life and with only a hint of the barn in the foggy background, but I also felt this could be a perfect test for realistic object generation. The frozen creek looks credible. The old shack - Firefly even recognized the mist/haze and did a great job of mimicking and blending it to match the surroundings. The cows... meh... those need work, in both the foreground and background.

Update: There has already been an update to the Photoshop Beta and Firefly integration since I started writing this article. I decided to test out the pasture image again, as I'd heard there are noticeable improvements to the AI model. I can say that there is definitely more detail in the generated cows, but they are still not quite right. That said, things are getting better.

Tip: You're not stuck with what is generated. In the scene above, I also added a small amount of noise to the generated layers to help with blending, and I tweaked the mask of the herd in the background so more of the original image came through. I even removed a couple of the less believable cows by altering the mask.

You. Look. Maawvelous!

Just for fun, I thought I'd try out some new hairstyles. The first image is the original; the rest are... well, you know...

I also added new content: a stack of books and a camera. I was quite impressed that the stack of books respected the level of sharpness in the background of the image. The camera was an interesting add-in, but not perfect. I went through multiple generations, but never really came up with something I was super happy with. And in the end, I decided to put myself in a location that was more appropriate for my shirt.

Unknown Wilderness

One of the current downsides to AI-generated imagery is the image resolution that these tools produce. And the Generative Fill tool in the Photoshop Beta is no different (currently). The desire to increase the resolution of these assets is not new; it has likely been around since the first Gen AI image was created.

As of this writing, Generative Fill creates a 1024x1024 pixel tile to fill your area. This means that if your selection is a lot larger than that, the breakdown in image quality can be very noticeable.

There is a hack, however, if you're willing to invest the time. You can extend your canvas as desired, then create a 1024x1024 selection and fill in the empty area piece by piece, as I did with the canoe image above.
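If you'd like to plan the piece-by-piece approach before you start clicking, the arithmetic is simple enough to sketch. The Python below is a hypothetical helper, not part of Photoshop or Firefly: `plan_tiles` takes the pixel dimensions of the area you want to fill and returns the corners of selections no larger than 1024x1024, each overlapping its neighbours so every new fill has some existing content to blend with. The 1024 tile size comes from the current beta behaviour described above; the 128-pixel overlap is an arbitrary assumption, so adjust both to taste.

```python
def plan_tiles(width, height, tile=1024, overlap=128):
    """Return (left, top, right, bottom) boxes, each at most tile x tile
    pixels, that together cover a width x height area. Neighbouring boxes
    overlap by at least `overlap` pixels (an assumed value, not an Adobe one)."""
    if overlap >= tile:
        raise ValueError("overlap must be smaller than the tile size")
    step = tile - overlap

    def starts(length):
        # Offsets along one axis; the last box is pinned to the far edge
        # so nothing is left uncovered.
        if length <= tile:
            return [0]
        offsets = list(range(0, length - tile, step))
        offsets.append(length - tile)
        return offsets

    boxes = []
    for top in starts(height):
        for left in starts(width):
            boxes.append((left, top, min(left + tile, width), min(top + tile, height)))
    return boxes


# Example: plan the selections for a hypothetical 3000 x 2000 pixel area.
for box in plan_tiles(3000, 2000):
    print(box)
```

For example, a 3000x2000 pixel area works out to a grid of 4 x 3 = 12 selections. Pinning the last box to the edge means the final row and column overlap a bit more than the others, which only gives the fill more context to match.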

The hack takes longer, but the results are much better. I’m very pleased with the end result, so much so that I actually plan on printing this image. And I have no doubt that higher resolution generation is near the top of the list for the engineering team.

People-Free Zone

As you can probably tell, I'm primarily a landscape and wildlife photographer. In general, my preference is NOT to have people in my photos. But when I do - either by choice or circumstance - I often wish later I could remove them. Sometimes I can with existing tools like the Healing Brush and Content-Aware Fill, but Generative Fill and the Remove Tool make this process far easier and much faster.

In less than a minute, Generative Fill seamlessly removed these people from the photo.

Image courtesy of Danica Adler

This project took 20-25 minutes and still needs a bit of touch up. But just the fact that I got so far in so little time with such a complex image is - frankly - mind-blowing!

Something From Nothing - Promptgraphy

As if all this isn't amazing - and perhaps unnerving - enough, there's more. You don't even have to start with a photograph. Yes, that's right. The image below never existed, and took about 10 minutes to create from a blank canvas.

Remember earlier I brought up the topic of digital storytelling? Well, feast your eyes on the potential.

Two versions of a non-existent scene; in one, I requested a long exposure.

My text prompts included the following:

- lake with long old wooden dock POV perspective

- alpine treeline

- beautiful sunrise

- old cabin on the lake

- flock of birds in the distance

- old wooden boat in the distance

Each of the above text prompts required that I first make a selection using any of the selection tools. Afterwards, I also added an adjustment layer, using one of the new Adjustment Presets for landscapes, to warm up the foreground.

Below is a very short video showing the elements being generated and then tweaked/cleaned up with the new Remove Tool and a bit more unprompted Generative Fill actions.

I'm reading that there are already terms for this process, to help further differentiate it from actual photography. The two being bandied about are Promptography and Promptgraphy. I like Promptography, but it already has an existing meaning.

Closing Thoughts

My first thought is - Wow! Even after a couple of weeks of testing, creating and experimenting, the shine of this innovation has not yet worn off. While it can all seem somewhat overwhelming, I urge you to keep in mind that generative AI is just another tool in your creative toolset. You can choose to use it - or not. But make that choice intelligently. Educate yourself. In my opinion, you are doing yourself - and possibly your customers - a disservice if you try to ignore it.

I remember deriding digital cameras when they first came out. That revolution didn't happen as fast as generative AI, but it was just as impactful to photography of all kinds. And now, digital cameras are the de facto standard for hobbyists and professionals.

As this technology gets incorporated into other Adobe tools (Adobe Express, for example), the ability for anyone to tell and share their stories, reflections and ideas in a professional and engaging manner becomes easier and more fun. The tech takes a back seat to the individual's creativity and desire to share.

I hope this article and the videos below, from other accomplished photographers, give you a sense of the potential of this new tool. I'd love to hear and see what you are doing with generative AI when it comes to photography, so please share in the comments section.

Resources

Terry White

Glyn Dewis

Anthony Morganti